Why my informal Twitter polls are so valuable

The trolls hate my Twitter polls because these polls invariably confirm what I claim. Are they right to claim these polls have no scientific value? No, and here's why.

Executive Summary

I get no end of grief from Twitter trolls who discount the significance of Twitter polls.

They really hate it when a poll shows they are wrong.

They criticize me for using the polls.

Why? Because they know it’s a very valuable tool for discovering the truth, and they’d rather I limit my data to sources over which I have no control whatsoever, which I can’t verify, and for which I am never able to get the source data. You can probably guess why someone interested in the truth would not want to be limited that way.

The bottom line is that if you are right about something, all the pieces of data, including Twitter polls, should align with your hypothesis or, if they don’t, be explainable.

My use of Twitter polls

Generally, I use these polls in one of two ways:

As a quick way to see if there might be a signal worth investigating further

As a way to confirm my suspicions based on other evidence.

If you are careful about how you interpret these polls, they are a powerful technique for gathering ground truth data.

An example: Do vaccines cause autism?

This question has baffled scientists for decades because the mainstream medical community has gaslighted doctors so well with poorly designed or corrupt studies designed to conceal the effect.

When I looked at the underlying studies and collected first-hand data, it was very clear that autism can develop very quickly in about 30% of the cases and the parents notice a dramatic change over just 24 hours.

I call this “overnight autism.”

In those cases where overnight autism occurs, I know from parent surveys that nearly 30% of the “overnight autism” cases happen within 24 hours after a vaccine shot and never in the 24 hours before the shot.

That’s causation. Combined with everything we also know about autism and vaccines, there is no other possible explanation for that observation.

If vaccines didn’t cause “overnight autism,” then we’d see a very even distribution where the number of events happening every day after vaccination is constant.

What did my simple Twitter poll show?

I started the poll below at 1am PST which is when most of my troll friends are asleep.

Here’s a snapshot of the current poll as well as the poll results taken 12 hours earlier.

Do you see how the percentages are nearly identical?

This means that my “friends” didn’t game the poll results because once you hit 350 responses, the resulting percentages don’t really change much.

So what else can we learn from this?

10.5/38.3 = 27.4% of the cases were within 24 hours, very close to our 30% number from direct polling of previously identified parents of autistic children.

If the vaccine were perfectly safe and we looked at just the “overnight autism” happening within 30 days after a shot, we’d expect 3.3% of cases per day, not 27.4%.
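The arithmetic behind these two percentages can be checked with a minimal sketch. The variable names are illustrative only. The 10.5 and 38.3 figures are the poll percentages quoted above, and the baseline simply assumes cases are spread evenly over a 30-day window.

```python
# Poll percentages quoted in the text (share of all respondents).
within_24h_pct = 10.5        # onset reported within 24 hours of a shot
within_30_days_pct = 38.3    # onset reported any time within 30 days of a shot

# Share of the 30-day cases that fell on the first day.
first_day_share = within_24h_pct / within_30_days_pct

# Assumed baseline: cases spread evenly across the 30 days.
uniform_daily_share = 1 / 30

print(f"first-day share:     {first_day_share:.1%}")
print(f"uniform daily share: {uniform_daily_share:.1%}")
```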

If we plug in the numbers, we find a p-value of < 0.00001.

This means the effect wasn’t random chance… something caused it.

In light of the fact that 27.4% agrees so closely with the 30% I previously had, I think it’s a pretty good bet that my anti-vaxxer followers are reporting accurately in the Twitter poll and the results are accurate.

Could this just be reporting bias?

Let’s play devil’s advocate and claim that the entire effect is imaginary and is caused by selection bias.

If autism is independent of vaccination date, this is the simplest way to explain the effect.

So we assume the likelihood of autism is the same every day (since it isn’t related to the vaccine at all), but that a parent whose child became autistic on day 1 is more likely to subscribe to the Steve Kirsch Substack.

Let’s see how the math needs to work out

It turns out that if a parent is 72.6% as likely to sign up as a parent whose child’s onset came one day earlier (that is, 27.4% less likely with each additional day), 27.4% of my signups will be from parents whose child went overnight autistic on the first day.

The stats we’d then expect to see for the distribution over the first 30 days are:

Within 24 hours: 27.4%

Within first week (but not first day): 62%

Within first month (but not first week): 10.6%
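The three percentages above can be reproduced with a simple geometric decay over 30 days. This is only a sketch of that model: the function name is hypothetical, and the day-over-day ratio of 0.726 (each day 72.6% as likely as the one before) is the value that yields the figures listed above.

```python
def geometric_day_shares(ratio, days=30):
    """Share of signups per day when each day is `ratio` times as likely as the day before."""
    weights = [ratio ** d for d in range(days)]
    total = sum(weights)
    return [w / total for w in weights]

shares = geometric_day_shares(ratio=0.726)

first_day = shares[0]             # day 1
rest_of_week = sum(shares[1:7])   # days 2 through 7
rest_of_month = sum(shares[7:])   # days 8 through 30

print(f"within 24 hours:             {first_day:.1%}")
print(f"first week, not first day:   {rest_of_week:.1%}")
print(f"first month, not first week: {rest_of_month:.1%}")
```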

Let’s multiply by .383 to get the numbers we are supposed to see in our spreadsheet (since 61.7% were none of the above):

Within 24 hours: 10.5%

Within first week (but not first day): 23.7%

Within first month (but not first week): 4.06%

Which doesn’t match.

So it seems the “it’s reporting bias” hypothesis doesn’t explain the effect.
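The scaling step used above (multiplying the model percentages by .383) can be sketched as follows. The dictionary labels are illustrative, and the inputs are the rounded percentages from the text, so the last digit may differ slightly from a calculation on unrounded values.

```python
# Share of all respondents in the three 30-day categories
# (the text states 61.7% answered "none of the above").
scale = 1 - 0.617

# Model percentages within the 30-day window, as listed in the text.
model_pct = {
    "within 24 hours": 27.4,
    "first week, not first day": 62.0,
    "first month, not first week": 10.6,
}

# Convert to a share of all poll respondents.
scaled_pct = {label: pct * scale for label, pct in model_pct.items()}

for label, pct in scaled_pct.items():
    print(f"{label}: {pct:.2f}%")
```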

Could there be a “recall” bias that people only recall overnight autism when it happens right after a vaccine?

Could there be another effect like “recall bias” explaining the outcome?

No, because it would produce statistics similar to the ones we just worked through.

We are left with “the vaccine caused the effect” as the most likely explanation.

Parents of autistic kids won’t find that surprising at all.

How biased are my followers?

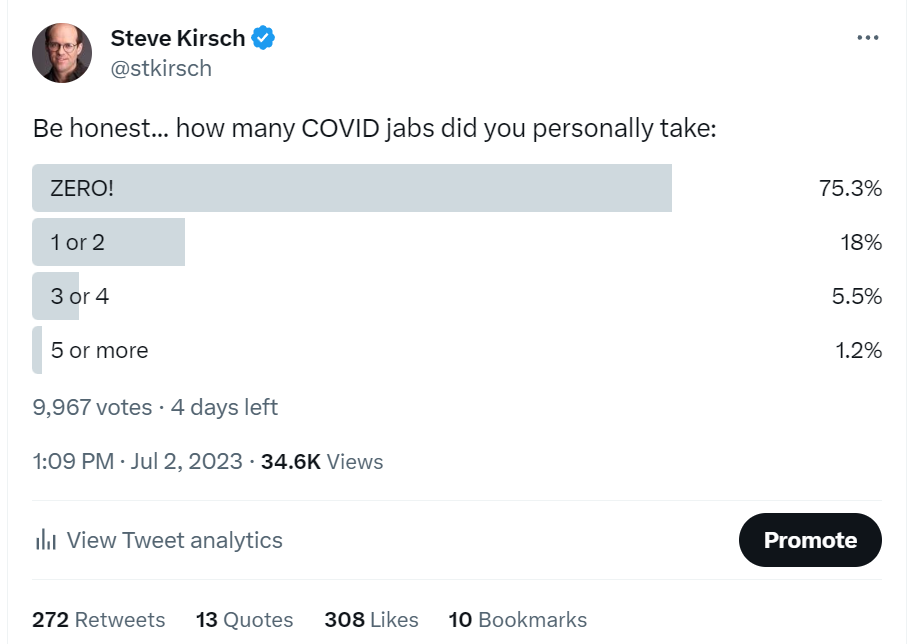

In case you were wondering, my followers are an inverted sample of America. In America, 75% are vaxxed. For my followers, 75% are unvaxxed.

The vaccination rate of my followers is irrelevant here since every child in the survey (in the three categories) was vaccinated.

Summary

Twitter surveys, when used carefully, can be a powerful tool for quickly assessing truth.

Unless I missed something, the only way to explain this Twitter survey (and all the rest of the autism data I’m aware of), is that vaccines cause autism.

It is yet another piece of data to throw in the mix.

Also, my latest survey data shows 28% had overnight autism within 24 hours of the shot. The Twitter survey gave 27.4%. Interesting, isn’t it?

I am a family physician (naturopathic) with 44 years experience. Many years ago, before personal computers, I began to see this “overnight autism” phenomenon. The moms of these children, usually males, were invariably told by the pediatrician that the autism or other damage was merely a coincidence. I began to search for safety studies. Surely, the CDC, NIH or FDA had done studies, like comparing 100 monkey babies given the vaccine schedule to 100 unvaccinated controls and followed these monkeys to see if anything occurred. I could not find any real or significant safety studies. Not even a monkey study. I grew increasingly skeptical.

Steve you are 100% correct. I had my first child the same day as a very close friend. We were in hospital together. She gave all the vaccines to her child; I didn't give any. Unfortunately her child is on the spectrum & has ADD & has struggled lots. At the time my friend did not hear me, she certainly regrets it now. It is very very sad.